Neural Architecture Search for Bird's-Eye-View Perception Models for Automotive Applications

- Type: Master's thesis

- Date: from 04/2025

- Tutor:


Context

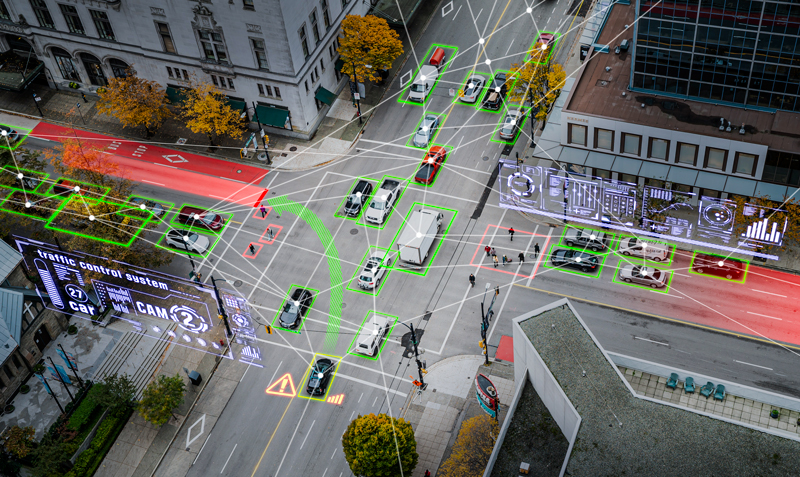

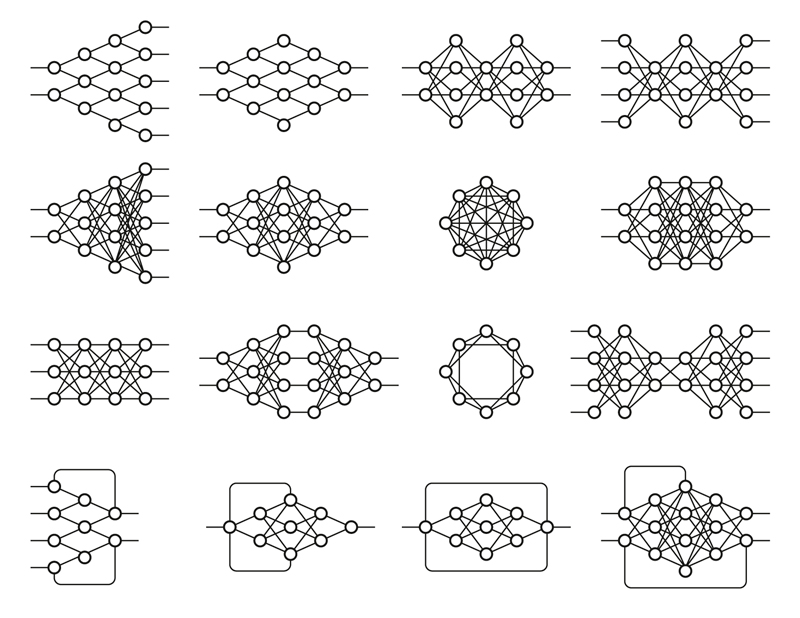

Neural Architecture Search (NAS) has proven to be a powerful tool for automating the design of deep learning (DL) models: it optimizes neural network (NN) architectures to achieve better efficiency and performance. Meanwhile, Bird's-Eye-View (BEV) perception models [1] have become essential in automotive applications, enabling robust scene understanding for autonomous driving and Advanced Driver-Assistance Systems (ADAS). This thesis will apply NAS to BEV perception models, with the aim of finding architectures suitable for deployment on embedded systems.

Targets

- Research the state of the art (SOTA) in NAS and BEV perception approaches.

- Based on these findings, design and implement a NAS framework tailored to searching for optimized BEV architectures.

- Evaluate the performance of the discovered models on embedded GPU platforms using autonomous-driving datasets, and compare them against SOTA models.

Requirements

- Experience with deep learning (DL) and a DL framework such as PyTorch or Keras/TensorFlow

- Experience with Python and computer vision